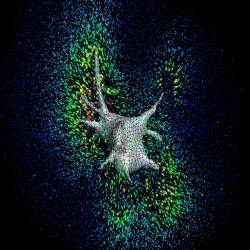

E. coli cells are manipulated on a silicon chip by MIT researchers using 'optical tweezers' to form the letters 'MIT.'

Tool could manipulate tiny objects on a chip

In a feat that seems like something out of a microscopic version of Star Trek, MIT researchers have found a way to use a "tractor beam" of light to pick up, hold and move around individual cells and other objects on the surface of a microchip.

The new technology could become an important tool for both biological research and materials research, say Matthew J. Lang and David C. Appleyard, whose work is being published in an upcoming issue of the journal Lab on a Chip. Lang is an assistant professor in the Department of Biological Engineering and the Department of Mechanical Engineering. Appleyard is a graduate student in Biological Engineering.

The idea of using light beams as tweezers to manipulate cells and tiny objects has been around for at least 30 years. But the MIT researchers have found a way to combine this powerful tool for moving, controlling and measuring objects with the highly versatile world of microchip design and manufacturing.

Optical tweezers, as the technology is known, represent "one of the world's smallest microtools," says Lang. "Now, we're applying it to building [things] on a chip."

Says Appleyard, "We've shown that you could merge everything people are doing with optical trapping with all the exciting things you can do on a silicon wafer … There could be lots of uses at the biology-and-electronics interface."

For example, he said, many people are studying how neurons communicate by depositing them on microchips where electrical circuits etched into the chips monitor their electrical behavior. "They randomly put cells down on a surface, and hope one lands on [or near] a [sensor] so its activity can be measured. With [our technology], you can put the cell right down next to the sensors." Not only can motions be precisely controlled with the device, but it can also provide very precise measurements of a cell's position.

Optical tweezers use the tiny force of a beam of light from a laser to push around and control tiny objects, from cells to plastic beads. They usually work on a glass surface mounted inside a microscope so that the effects can be observed.

But silicon chips are opaque to visible light, so applying this technique to them is not an obvious move, the researchers say, since optical tweezers use light beams that have to travel through the material to reach the working surface. The key to making it work on a chip is that silicon is transparent to infrared wavelengths of light, which can be easily produced by lasers and used instead of visible light beams.

To develop the system, Lang and Appleyard first had to determine what thickness and surface texture of wafers, the thin silicon slices used to manufacture microchips, would work best. But wafers are expensive and usually available only in quantity. "Being at MIT, where there is such a strength in microfabrication, I was able to get wafers that had been thrown out," Appleyard says. "I posted signs saying, 'I'm looking for your broken wafers'."

After testing different samples to determine which worked best, they were able to order a set that was just right for the work. They then tested the system with a variety of cells and tiny beads, including some that were large by the standards of optical tweezer work. They were able to manipulate a square with a hollow center that was 20 micrometers, or millionths of a meter, across, demonstrating that even larger objects could be moved and rotated. Other test objects had dimensions of only a few nanometers, or billionths of a meter. Virtually all living cells come in sizes that fall within that nanometer-to-micrometer range and can thus be manipulated by the system.

As a demonstration of the system's versatility, Appleyard says, they set it up to collect and hold 16 tiny living E. coli cells at once on a microchip, forming them into the letters MIT.

The work was supported by the Biotechnology Training Program of the National Institutes of Health, the W.M. Keck Foundation and MIT's Lincoln Laboratory.
